AI-Assisted Development: From Autocomplete to Autonomous Coding

In AI 00, we built the engine — Transformers, attention, next-token prediction. In AI 01, we learned to drive it — latent space navigation, Chain of Thought, structured output.

Now it’s time to put that engine inside the machine you use every day: your code editor.

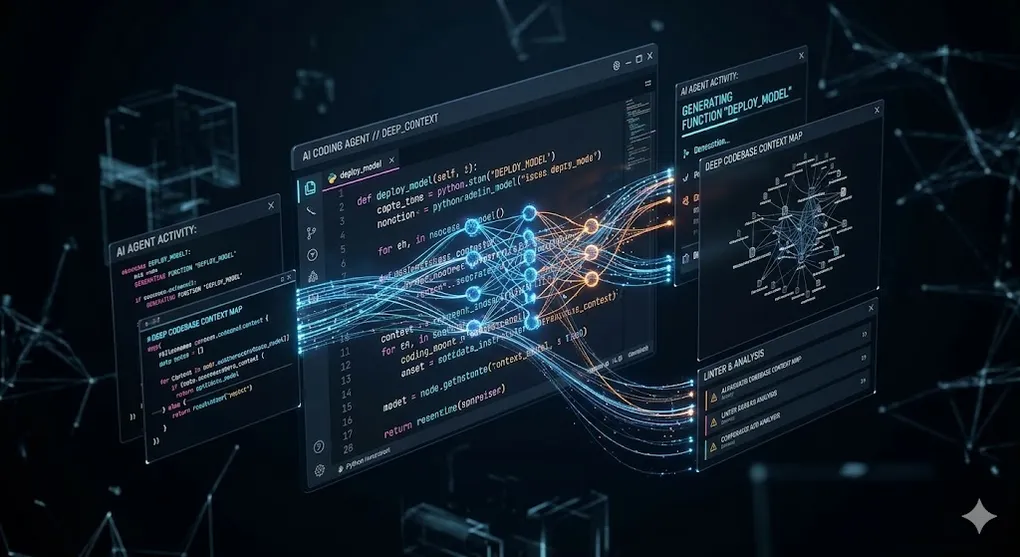

TL;DR: AI coding tools have evolved from single-line autocomplete to autonomous agents that read your codebase, plan changes across multiple files, and execute shell commands. This article compares five tools across four levels of capability, so you can pick the right tool for every task.

┌───────────────────────────────────────────────────────────┐

│ AI-Assisted Coding Evolution                              │

│                                                           │

│ Level 1 │ Tab Completion (Copilot)      │ ← One line      │

│ Level 2 │ Chat + Edit (Cursor Chat)     │ ← Q&A           │

│ Level 3 │ Multi-file Compose (Composer) │ ← Orchestration │

│ Level 4 │ Agentic Coding (Claude Code)  │ ← Autonomy      │

│                                                           │

│ Pick the level that matches your task complexity.         │

└───────────────────────────────────────────────────────────┘

The question isn’t “which tool is best”; it’s “which tool is best for THIS task.” Fixing a typo? Tab completion. Refactoring a module? Chat. Migrating an entire codebase to a new framework? Agentic mode.

Article Map

I: Theory Layer (why AI coding tools work)

1. The Paradigm Shift: From deterministic linters to probabilistic generators

2. How Code LLMs Differ from Chat LLMs: Fill-in-the-Middle, context windows, latency

II: Tool Layer (deep comparison)

3. GitHub Copilot Deep Dive: Architecture, context assembly, Workspace

4. Cursor Deep Dive: Multi-model, @-mentions, Composer, .cursorrules

5. Claude Code Deep Dive: Terminal-native agent, agentic loop, CLAUDE.md

6. Windsurf Deep Dive: Cascade flow, free tier strategy

7. Aider Deep Dive: Open-source, git-native, local models

8. Head-to-Head Comparison: Feature matrix across all five

III: Engineering Layer (production workflow)

9. Effective Prompting for Code: Specificity hierarchy, anti-patterns

10. AI-Assisted Code Review: PR analysis, CodiumAI, review agents

11. Cost, Privacy & Enterprise Security: SOC 2, code leak risks, compliance

12. Integration into CI/CD: AI in pipelines, test generation, documentation

13. Key Takeaways: Compressed insights

1. The Paradigm Shift: Static Tooling → Probabilistic Tooling

For forty years, developer tools were deterministic. A linter checks rules. A formatter applies rules. An autocomplete engine matches tokens from a symbol table. Input X always produces output Y.

Then the Transformer arrived (AI 00 §6), and everything changed.

1980s Manual coding → compile → fix errors → repeat

2000s IDE + autocomplete → compile → fix → repeat

2010s IDE + linter + formatter → compile → fix → repeat

2023+ IDE + LLM → generate → review → ship

The shift isn’t incremental; it’s categorical:

| Era | Tool Type | Nature |
|---|---|---|
| Pre-2021 | Static analysis, snippets | Deterministic: rules humans wrote |
| 2021+ | Code LLMs | Probabilistic: patterns the model learned |

This maps directly to the two schools of AI from AI 00 §1.2:

- Static tooling = Symbolism (explicit rules, hand-crafted logic)

- LLM tooling = Connectionism (learned patterns, statistical inference)

The key insight: the developer’s role shifts from “writer” to “reviewer.” You spend less time typing boilerplate and more time evaluating whether the generated code is correct, efficient, and secure. This is a fundamental change in how software engineers create value.

🔧 Engineer’s Note: This “writer → reviewer” shift has a counterintuitive implication: senior engineers benefit MORE from AI tools than juniors. Why? Because reviewing code requires the judgment that comes from experience. A senior engineer spots a subtle race condition in generated code instantly; a junior might accept it. AI amplifies existing skill — it doesn’t replace it.

2. How Code LLMs Differ from Chat LLMs

Not all LLMs are created equal. The models behind Copilot and Cursor are trained differently from the ChatGPT you use for conversation.

2.1 Fill-in-the-Middle (FIM)

Chat LLMs generate text left-to-right: you provide a prompt, and the model continues from the end. Code completion needs something different. The model must fill in code between existing lines, using what comes after the cursor as well as what comes before it.

This is the Fill-in-the-Middle training paradigm:

Standard left-to-right (Chat LLM):

[prompt tokens] → generate →→→→ [completion]

Fill-in-the-Middle (Code LLM):

[prefix tokens] <FILL> [suffix tokens]

↓

Model generates infill tokens

↓

[prefix] + [generated] + [suffix]

FIM is why Copilot can complete code inside a function body, not just at the end of a file. The model sees both what comes before AND after the cursor, enabling contextually aware insertion.

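Formally, a standard autoregressive LLM models a token sequence $x = (x_1, \dots, x_T)$ strictly left to right:

$$p(x) = \prod_{t=1}^{T} p(x_t \mid x_{<t})$$

FIM splits each training document into a prefix $P$, a middle $M$, and a suffix $S$, and restructures the input as a three-part concatenation:

$$\text{input} = [\text{PREFIX}] \oplus P \oplus [\text{SUFFIX}] \oplus S \oplus [\text{MIDDLE}]$$

Here $\oplus$ denotes token concatenation with the special sentinel tokens [PREFIX], [SUFFIX], and [MIDDLE]. The model generates the middle $M = (m_1, \dots, m_k)$ to be inserted between prefix and suffix:

$$p(M \mid P, S) = \prod_{t=1}^{k} p(m_t \mid P, S, m_{<t})$$

During training, the model learns to “fill in the blank” by attending to both past ($P$) and future ($S$) context simultaneously.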

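Connection to AI 00 §6.2: In the attention mechanism, $QK^\top$ computes the relevance between every token pair. With FIM, the suffix tokens provide additional key and value vectors that the infill tokens can attend to. The suffix is placed before the [MIDDLE] sentinel in the input, so ordinary causal attention already covers it. The model effectively “sees the future” of the code it’s inserting into, with no change to the architecture.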
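The following minimal TypeScript sketch makes the prefix-suffix-middle layout concrete. It builds a FIM input from a file and a cursor offset, then splices a generated middle back in. The `[PREFIX]`/`[SUFFIX]`/`[MIDDLE]` strings stand in for whatever sentinel tokens a given model was trained with. `buildFimPrompt` and `applyInfill` are illustrative names, not any tool’s API.

```typescript
// Placeholder sentinels matching the notation above. Real models define their own
// special tokens (StarCoder, for example, uses <fim_prefix>, <fim_suffix>, <fim_middle>).
const PREFIX_TOKEN = "[PREFIX]";
const SUFFIX_TOKEN = "[SUFFIX]";
const MIDDLE_TOKEN = "[MIDDLE]";

// Split the file at the cursor and build a prefix-suffix-middle (PSM) model input.
// The suffix sits BEFORE the [MIDDLE] sentinel, so plain left-to-right (causal)
// attention already covers the code after the cursor while the middle is generated.
function buildFimPrompt(file: string, cursor: number): string {
  const prefix = file.slice(0, cursor);
  const suffix = file.slice(cursor);
  return `${PREFIX_TOKEN}${prefix}${SUFFIX_TOKEN}${suffix}${MIDDLE_TOKEN}`;
}

// Once the model has generated the middle, splice it back in at the cursor.
function applyInfill(file: string, cursor: number, middle: string): string {
  return file.slice(0, cursor) + middle + file.slice(cursor);
}

const source = "function add(a: number, b: number) {\n  \n}\n";
const cursor = source.indexOf("  \n") + 2; // inside the empty function body
console.log(buildFimPrompt(source, cursor));
// Pretend the model returned "return a + b;" as the middle:
console.log(applyInfill(source, cursor, "return a + b;"));
```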

2.2 Context Window as Codebase Window

When you use Cursor or Copilot, the model doesn’t see your entire project. It sees a carefully curated context window — a subset of your codebase assembled by the tool’s context engine:

Context Assembly (what the model actually "sees"):

┌─ Current file (cursor position) ──────── HIGH priority

├─ Open tabs (weighted by language match) ─ MEDIUM priority

├─ Import graph (type definitions) ──────── MEDIUM priority

├─ Recently edited files ────────────────── LOW priority

└─ Codebase index (semantic search) ─────── ON DEMAND

This assembly strategy is critical because context windows are finite (4K–200K tokens depending on the model). What the tool chooses to include, and what it excludes, determines the quality of suggestions.

Connection to AI 01 §3.4 (“Lost in the Middle”): The same attention degradation that affects long prompts affects code context. Information in the middle of a large context window gets less attention. This is one reason tools tend to place the code around the cursor (the most relevant context) at the end of the prompt, where the recency bias of decoder-only models works in your favor.
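The priority list above can be sketched as a greedy packer under a token budget. This is a hypothetical illustration of the idea, not how Copilot or Cursor actually build their prompts. The three priority levels, the ~4-characters-per-token estimate, and the `Snippet`/`assembleContext` names are all assumptions.

```typescript
// Hypothetical context assembler: greedily pack snippets into a token budget by
// priority, then order them so the highest-priority snippet (the code around the
// cursor) ends up last, closest to where generation starts.
type Priority = "high" | "medium" | "low";

interface Snippet {
  source: string; // e.g. "current file", "open tab: types.ts"
  text: string;
  priority: Priority;
}

const RANK: Record<Priority, number> = { high: 3, medium: 2, low: 1 };

// Crude estimate of ~4 characters per token; a real tool would use the model's tokenizer.
function estimateTokens(text: string): number {
  return Math.ceil(text.length / 4);
}

function assembleContext(snippets: Snippet[], budget: number): Snippet[] {
  // Highest priority first (sort is stable, so ties keep their input order).
  const byPriority = [...snippets].sort((a, b) => RANK[b.priority] - RANK[a.priority]);
  const chosen: Snippet[] = [];
  let used = 0;
  for (const snippet of byPriority) {
    const cost = estimateTokens(snippet.text);
    if (used + cost > budget) continue; // doesn't fit: skip it, but keep trying smaller ones
    chosen.push(snippet);
    used += cost;
  }
  // Lowest priority first, so the most relevant snippet sits at the end of the prompt.
  return chosen.reverse();
}

const context = assembleContext(
  [
    { source: "recently edited: db.ts", text: "x".repeat(400), priority: "low" },
    { source: "current file", text: "y".repeat(200), priority: "high" },
    { source: "open tab: types.ts", text: "z".repeat(120), priority: "medium" },
  ],
  100,
);
console.log(context.map((s) => s.source)); // [ 'open tab: types.ts', 'current file' ]
```

With a 100-token budget, the low-priority snippet (about 100 tokens) no longer fits after the other two (about 80 tokens together), so it is dropped. The current file lands last.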

2.3 The Latency Constraint

There’s a constraint that doesn’t exist in chat: autocomplete must be fast.

Human perception sets three latency thresholds (100 ms, 200 ms, and 500 ms) that every AI coding tool must respect. Together they divide the experience into four bands:

Latency Thresholds for Code Completion:

< 100ms │ Feels "instant" — seamless typing flow

│ Human perception limit for "instantaneous"

│ Only achievable with local models or edge inference

─ ─ ─ ─ ─│─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─

100-200ms │ Acceptable — slight pause, flow unbroken

│ Target zone for cloud-based autocomplete

│ This is where Copilot/Cursor aim

─ ─ ─ ─ ─│─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─

200-500ms │ Noticeable lag — starts to feel "slow"

│ Developer begins to type ahead of suggestions

│ Partially breaks the cognitive flow

─ ─ ─ ─ ─│─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─

> 500ms │ Flow-breaking — developer enters "waiting" mode

│ The "Flow State" (Csikszentmihalyi) is interrupted

│ Developers start ignoring suggestions at this point

This creates a fascinating engineering tradeoff:

| Approach | Latency | Quality | Where |
|---|---|---|---|
| Local SLM (DeepSeek-Coder 7B on GPU) | ~50-100 ms | Good for completion | On your machine |
| Cloud small model (speculative draft) | ~100-200 ms | Good | Copilot/Cursor inline |
| Cloud large model (GPT-4o, Claude) | ~500-2000 ms | Excellent | Chat/Composer mode |

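Speculative decoding bridges this gap. A small, fast “draft model” proposes several candidate tokens, then a larger model verifies all of them in a single forward pass. Every draft token the large model agrees with is kept; at the first disagreement, the large model’s own token is used instead. When the draft is usually right (as it is for common patterns), you get the large model’s output at close to small-model speed.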
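Here is a minimal sketch of the greedy variant, using toy deterministic “models” in place of real networks. The token list, the draft’s single mistake, and k = 4 are made up for illustration. Notice that the final output is exactly what the large model would have produced on its own; the draft only reduces how many large-model passes it takes.

```typescript
// Toy greedy speculative decoding. A "model" here is just a function that returns
// the next token for a context. Real systems compare probability distributions and
// verify all draft positions in ONE batched forward pass of the large model; the
// per-position target() calls below stand in for that single pass.
type NextToken = (context: string[]) => string;

function speculativeStep(context: string[], draft: NextToken, target: NextToken, k: number): string[] {
  // 1. The cheap draft model proposes k tokens, one after another.
  const proposal: string[] = [];
  for (let i = 0; i < k; i++) proposal.push(draft([...context, ...proposal]));

  // 2. The large model checks every proposed position.
  const accepted: string[] = [];
  for (let i = 0; i < k; i++) {
    const expected = target([...context, ...proposal.slice(0, i)]);
    if (expected !== proposal[i]) {
      accepted.push(expected); // first disagreement: keep the large model's token, drop the rest
      return accepted;
    }
    accepted.push(proposal[i]);
  }
  // 3. All k drafts accepted: the same pass also yields one bonus token.
  accepted.push(target([...context, ...proposal]));
  return accepted;
}

// Toy models: the "large" model knows the full line; the draft gets one token wrong.
const line = ["const", "total", "=", "items", ".", "reduce", "(", "sum", ")", ";"];
const target: NextToken = (ctx) => line[ctx.length] ?? "<eos>";
const draft: NextToken = (ctx) => (ctx.length === 5 ? "map" : line[ctx.length] ?? "<eos>");

let output: string[] = [];
let passes = 0;
while (output[output.length - 1] !== "<eos>") {
  output = [...output, ...speculativeStep(output, draft, target, 4)];
  passes++;
}
console.log(`${output.length} tokens in ${passes} large-model passes`); // "11 tokens in 3 large-model passes"
console.log(output.join(" "));
```

Production implementations sample from probability distributions and use a rejection rule that preserves the large model’s output distribution. The greedy exact-match check here is the simplest special case.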

🔧 Engineer’s Note: This is a counterintuitive insight: for autocomplete specifically, a local 7B model often provides a better experience than a cloud-based GPT-4o. Not because it’s smarter (it’s objectively worse at complex reasoning), but because it responds in 50-100 ms instead of 500 ms or more. The quality gap matters less for single-line completions, where most suggestions are common patterns anyway, and more for complex multi-step tasks. This is why the “Level” framework matters: use fast/local models for Level 1 (completion) and cloud/large models for Levels 3-4 (compose/agent).

🔧 Engineer’s Note: Copilot’s inline suggestions feel different from Copilot Chat because they literally use different models. Inline uses a smaller, faster model optimized for the 100-200ms latency target. Chat uses GPT-4o optimized for quality. Same underlying architecture, different engineering tradeoffs — just like how a sports car engine and a truck engine are both combustion engines designed for different jobs.

3. GitHub Copilot Deep Dive

What it is: An AI coding assistant by GitHub/Microsoft, available as a VS Code extension and as plugins for other editors. The original mainstream AI coding tool.

3.1 Architecture

┌──────────────────────────────────────────────┐

│ VS Code Extension (Client) │

│ │ │

│ ├─ Context Assembly Engine │

│ │ ├── Current file + cursor position │

│ │ ├── Open tabs (language-weighted) │

│ │ ├── Import graph + type definitions │

│ │ └── Recently edited files │

│ │ │

│ ├─ Suggestion UI (inline ghost text) │

│ └─ Chat Panel (Copilot Chat) │

│ │ │

│ ▼ │

│ GitHub Copilot API │

│ ├── GPT-4o (for chat) │

│ └── Custom code model (for completion) │

└──────────────────────────────────────────────┘

3.2 Three Modes of Operation

| Mode | Interface | Model | Best For |
|---|---|---|---|
| Inline Suggestions | Ghost text, Tab to accept | Fast/small model | Line-level completion |
| Copilot Chat | Side panel | GPT-4o | Explanations, debugging, refactoring |
| Copilot Workspace | Full-page UI | GPT-4o | Plan → implement → validate (agent preview) |

Copilot Workspace is particularly interesting — it’s GitHub’s early attempt at agentic coding. Given an issue, it:

- Plans the changes needed

- Implements them across files

- Validates with tests

This is a preview of the agent architecture we’ll explore in AI 05.

3.3 Strengths & Weaknesses

| ✅ Strengths | ❌ Weaknesses |
|---|---|
| Deepest GitHub integration (issues, PRs, repos) | Single model (no model choice) |
| Massive user base → battle-tested | Limited multi-file editing |
| Enterprise-ready (SOC 2, no training on code) | Context assembly is a black box |
| Works in 10+ editors | Inline suggestions can feel noisy |

🔧 Engineer’s Note: Copilot’s reported “40% acceptance rate” is misleading. It counts only suggestions accepted with a full Tab press. Developers often accept just part of a suggestion, or read it and type their own modified version, and those partially used suggestions aren’t counted. Real-world utility is likely higher than the headline number suggests.

3.4 Real-World Workflow: Copilot in Action

Here’s what Copilot looks like in a typical development session:

Workflow: Adding a new API endpoint

Step 1: Type function signature

│ async function getUser(id: string): Promise<User> {

│ ↑

│ Copilot sees: function name + param + return type

│ Copilot suggests: full implementation (DB query, error handling)

│ Developer: Tab (accept), then review

│

Step 2: Write a comment

│ // validate that the user has admin permissions

│ ↑

│ Copilot generates: complete permission check logic

│ based on patterns in other files

│

Step 3: Open Copilot Chat for complex logic

│ "Explain why this recursive function might stack overflow

│ and suggest a tail-recursive alternative"

│ ↑

│ Copilot Chat: detailed explanation + refactored code

🔧 Engineer’s Note: The most effective Copilot pattern is “comment-driven development”: write a comment describing what you want, let Copilot generate the implementation, then review and refine. This leverages AI 01’s core insight: clear natural language instructions narrow the latent space.
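Here is a hypothetical illustration of the Step 2 pattern. The comment is the “prompt”, and the function below it is the kind of completion a model might propose. The `User` shape, the `roles` field, `ForbiddenError`, and `assertAdmin` are invented for this sketch. Nothing guarantees Copilot would produce this exact code, which is why the review step stays with you.

```typescript
type Role = "admin" | "editor" | "viewer";

interface User {
  id: string;
  name: string;
  roles: Role[];
}

class ForbiddenError extends Error {
  constructor(message: string) {
    super(message);
    this.name = "ForbiddenError";
  }
}

// The comment from Step 2 acts as the prompt; the function is a hypothetical completion.
// validate that the user has admin permissions
function assertAdmin(user: User | undefined): asserts user is User {
  if (!user) throw new ForbiddenError("Not signed in");
  if (!user.roles.includes("admin")) {
    throw new ForbiddenError(`User ${user.id} lacks admin permissions`);
  }
}

// Usage: passes silently for an admin, throws for everyone else.
const alice: User = { id: "u1", name: "Alice", roles: ["admin"] };
assertAdmin(alice);
try {
  assertAdmin({ id: "u2", name: "Bob", roles: ["viewer"] });
} catch (e) {
  console.log((e as Error).message); // "User u2 lacks admin permissions"
}
```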

4. Cursor Deep Dive

What it is: A custom fork of VS Code with AI integrated at every level — not just a plugin bolted on top, but a fundamentally redesigned editor.

4.1 Architecture

┌─────────────────────────────────────────────────┐

│ Cursor (Custom VS Code Fork) │

│ │ │

│ ├─ Context Engine │

│ │ ├── @file, @folder — explicit includes │

│ │ ├── @codebase — semantic search │

│ │ ├── @web — live internet search │

│ │ ├── @docs — documentation lookup │

│ │ └── Codebase embedding index │

│ │ │

│ ├─ Model Router │

│ │ ├── GPT-4o │

│ │ ├── Claude 3.5 Sonnet │

│ │ ├── Gemini │

│ │ └── Custom models via API │

│ │ │

│ ├─ Chat Panel — single-file edits │

│ ├─ Composer — multi-file orchestrated edits │

│ └─ Apply Engine — diff-based code application │

└─────────────────────────────────────────────────┘

4.2 Key Differentiators

@-mentions for Context Control: Instead of hoping the tool includes the right files, you explicitly tell it:

@file:utils.ts @file:types.ts

Refactor the calculateTotal function to handle currency conversion.

Use the CurrencyRate type from types.ts.

This is Context Engineering applied to code: the same principle from AI 01 §2.3 (Context component), but with IDE-specific tools for precision.

Composer Mode: Cursor’s Composer can plan and execute changes across multiple files simultaneously. You describe a feature in natural language, and Composer:

- Identifies which files need changing

- Plans the changes

- Shows you a diff preview

- Applies changes on approval

Codebase Indexing: Cursor builds an embedding index of your entire project. When you type @codebase, it performs semantic search (cosine similarity over embeddings, exactly the mechanism from AI 00 §5.5) to find relevant code, even if you don’t know the file name.

Connection to AI 03: This embedding index is a mini RAG system. Cursor embeds your codebase into vectors, stores them in an index, and retrieves relevant chunks based on semantic similarity. When we cover RAG in AI 03, remember: you’ve already been using it every time you type @codebase.
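Here is a toy version of that retrieval step: rank indexed chunks by cosine similarity to a query embedding and keep the top k. The three-dimensional vectors and file names are made up. A real index would store embeddings produced by an embedding model, with hundreds or thousands of dimensions, and would typically chunk files rather than embed them whole.

```typescript
// Toy semantic search over a code index: rank chunks by cosine similarity to a query.
interface IndexedChunk {
  file: string;
  embedding: number[];
}

function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) throw new Error("dimension mismatch");
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) return 0; // avoid dividing by zero for empty vectors
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

function searchCodebase(query: number[], index: IndexedChunk[], topK: number): IndexedChunk[] {
  return index
    .map((chunk) => ({ chunk, score: cosineSimilarity(query, chunk.embedding) }))
    .sort((x, y) => y.score - x.score) // most similar first
    .slice(0, topK)
    .map((r) => r.chunk);
}

// Made-up 3-D "embeddings"; think of the axes loosely as auth / misc / billing.
const index: IndexedChunk[] = [
  { file: "src/auth/session.ts", embedding: [0.9, 0.1, 0.0] },
  { file: "src/billing/invoice.ts", embedding: [0.0, 0.2, 0.9] },
  { file: "src/auth/login.ts", embedding: [0.8, 0.3, 0.1] },
];
// Pretend this is the embedding of the query "where do we validate sessions?"
const queryEmbedding = [1.0, 0.2, 0.0];
console.log(searchCodebase(queryEmbedding, index, 2).map((c) => c.file));
// [ 'src/auth/session.ts', 'src/auth/login.ts' ]
```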

4.3 .cursorrules — Project-Level Prompt Engineering

This is where AI 01 meets AI 02. A .cursorrules file is a System Prompt for your project:

# .cursorrules example

You are an expert TypeScript/React developer.

## Code Style

- Use functional components with hooks

- Prefer named exports over default exports

- Use zod for runtime validation

## Architecture

- All API calls go through /lib/api/

- State management via Zustand (not Redux)

- Use server components by default (Next.js App Router)

## Testing

- Write tests for every public function

- Use vitest, not jest

- Mock external APIs with msw

Every interaction with Cursor Chat or Composer automatically includes this file as a system prompt prefix. It’s the Persona component from AI 01 §2.2: a persistent “role definition” that shapes every response.
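This hypothetical sketch shows what “automatically includes this file as a system prompt prefix” means mechanically. The role/content message shape mirrors common chat APIs. `buildRequest` is an invented name, and Cursor’s actual prompt assembly may differ.

```typescript
// Hypothetical: prepend a project rules file to every request as a system message.
interface ChatMessage {
  role: "system" | "user";
  content: string;
}

function buildRequest(projectRules: string | null, contextSnippets: string[], userPrompt: string): ChatMessage[] {
  const messages: ChatMessage[] = [];
  const rules = projectRules?.trim();
  if (rules) {
    // The rules file becomes a persistent system prompt, sent with every request.
    messages.push({ role: "system", content: rules });
  }
  // Explicit context (e.g. @file mentions) goes ahead of the actual request.
  const context = contextSnippets.join("\n\n");
  messages.push({ role: "user", content: context ? `${context}\n\n${userPrompt}` : userPrompt });
  return messages;
}

const cursorRules = "You are an expert TypeScript/React developer.\n- Use vitest, not jest";
const request = buildRequest(cursorRules, [], "Write tests for calculateTotal()");
console.log(request.map((m) => m.role)); // [ 'system', 'user' ]
```

Because the rules ride along on every call, they cost tokens each time. The payoff is that you never have to restate them in your own prompts.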

🔧 Engineer’s Note: .cursorrules is the prompt-level counterpart of fine-tuning: you’re programming the model’s default behavior for your codebase without touching any weights. Investing 30 minutes in a good .cursorrules file saves you from restating conventions in every prompt, and produces dramatically more consistent code.

4.4 Team Guide: Building Your .cursorrules / CLAUDE.md

For teams adopting AI coding tools, a well-structured config file is the single highest-ROI investment you can make. Here’s a battle-tested template:

# ═══════════════════════════════════════════════════

# PROJECT CONFIG TEMPLATE

# Save as: .cursorrules (for Cursor) or CLAUDE.md (for Claude Code)

# ═══════════════════════════════════════════════════

# §1 — Identity & Role

You are a senior [language] developer working on [project name].

You write clean, production-ready code. You never leave TODOs.

# §2 — Tech Stack (pin versions to avoid hallucinated APIs)

- Runtime: Node.js 20 / Python 3.12

- Framework: Next.js 14 App Router / FastAPI 0.109

- ORM: Prisma 5.8 / SQLAlchemy 2.0

- Testing: vitest + msw / pytest + httpx

- Styling: Tailwind CSS 3.4

# §3 — Architecture Rules

- All API calls go through /lib/api/ (never call fetch directly)

- Use server components by default, 'use client' only when needed

- All database access through repository pattern in /lib/db/

- Never import from ../../../ — use @/ path aliases

# §4 — Code Conventions

- Use named exports (no default exports)

- All functions that can fail return Result<T, AppError>

- Error messages must be user-facing strings, not stack traces

- Use Zod schemas for ALL external input validation

- Naming: camelCase for functions, PascalCase for types/components

# §5 — Testing Standards

- Every public function needs: 1 happy path + 1 error case + 1 edge case

- Use msw (Mock Service Worker) for API mocking

- No real network calls in tests

- Minimum 80% coverage for new code

# §6 — Security Rules

- Never hardcode secrets — use env variables via @/lib/config

- Sanitize all user inputs before database queries

- Use parameterized queries only (no string interpolation in SQL)

- Never commit .env files

# §7 — Commands (Claude Code / Aider specific)

- `npm run dev` — start dev server (port 3000)

- `npm test` — run full test suite

- `npm run lint` — ESLint + Prettier check

- `npm run typecheck` — TypeScript strict mode check

Team onboarding checklist:

When a new engineer joins:

□ Clone repo → .cursorrules / CLAUDE.md already included

□ AI tool immediately understands project conventions

□ First AI-assisted PR follows team patterns automatically

□ No "which framework do we use?" questions to AI — it already knows

Maintenance cadence:

□ Review .cursorrules in sprint retro (monthly)

□ Update when adding new libraries or changing patterns

□ Version control it: .cursorrules IS code infrastructure

🔧 Engineer’s Note: Treat .cursorrules like a living document, not a write-once config. The best teams review it monthly during sprint retros. When someone notices that AI keeps generating Redux code even though the project uses Zustand, that’s a missing rule in .cursorrules. Every recurring correction is a signal that your config file needs an update.

4.5 Real-World Workflow: Cursor Composer in Action

Here’s a realistic multi-file task using Cursor Composer:

Task: "Add a /api/reports endpoint that generates PDF reports

from the database"

─── Composer Input ───────────────────────────────────

@file:src/routes/index.ts

@file:src/services/database.ts

@file:src/types/report.d.ts

@docs:PDFKit

Add a GET /api/reports/:id endpoint that:

1. Queries the report data from the database

2. Generates a PDF using PDFKit

3. Returns the PDF as a downloadable file

4. Follow existing error handling patterns in routes/index.ts

────────────────────────────────────────────────

─── Composer Output ──────────────────────────────────

Modified files (shown as diffs):

✅ src/routes/reports.ts (NEW) — route handler

✅ src/services/pdf.ts (NEW) — PDF generation service

✅ src/routes/index.ts (EDIT) — added route import

✅ src/types/report.d.ts (EDIT) — added ReportPDF type

✅ package.json (EDIT) — added pdfkit dependency

Developer reviews each diff → Accept / Reject / Edit each

The critical difference from ChatGPT or Claude Chat: Composer shows you exact file diffs that you can accept, reject, or modify per file. It’s the difference between “here’s some code” and “here are the precise changes to your project.”

🔧 Engineer’s Note: The @docs mention is underrated. When Cursor pulls in library documentation (e.g., @docs:Prisma), it dramatically reduces hallucination about API signatures. Without it, the model guesses function names from training data, which may come from an older version of the library.

5. Claude Code (CLI Agent) Deep Dive

What it is: A terminal-native AI agent by Anthropic. Not an IDE plugin — a standalone CLI that reads your codebase, plans changes, and executes shell commands autonomously.

5.1 The Key Distinction

Copilot / Cursor: Claude Code:

┌──────────────┐ ┌──────────────┐

│ IDE Plugin │ │ Terminal CLI │

│ (GUI) │ │ (Agentic) │

│ │ │ │

│ Human types │ │ Human sets │

│ → AI assists │ │ goal → AI │

│ │ │ plans and │

│ IDE-driven │ │ executes │

└──────────────┘ └──────────────┘

5.2 Why Claude? The Benchmark Story

Claude Code isn’t model-agnostic: it runs exclusively on Anthropic’s Claude models. Why Claude? Because Claude 3.5 Sonnet emerged as the benchmark leader for code generation tasks:

| Benchmark | Claude 3.5 Sonnet | GPT-4o | Gemini 1.5 Pro |
|---|---|---|---|
| HumanEval (Python synthesis) | 92.0% | 90.2% | 84.1% |
| HumanEval+ (harder variants) | 85.2% | 80.6% | n/a |
| SWE-bench Verified (real GitHub issues) | 49.0% | 33.2% | n/a |
| MBPP+ (multi-step Python) | 87.6% | 83.0% | n/a |

The SWE-bench result is particularly significant. It measures the model’s ability to resolve real-world GitHub issues: reading a codebase, understanding the bug, and writing a fix that passes the repository’s tests. This is exactly the agentic coding workflow. Running inside an agent scaffold, Claude 3.5 Sonnet resolves nearly half of the issues in SWE-bench Verified (the human-validated subset), well ahead of GPT-4o.

Claude Code is Anthropic’s own tool, so it ships with Claude rather than offering model choice. For the agentic coding use case specifically, the benchmarks suggest this costs little, since Claude was the strongest model available when these results were published.

🔧 Engineer’s Note: HumanEval measures “can the model write a correct function from a docstring.” SWE-bench measures “can the model read a real codebase, understand a bug report, and submit a working fix.” The gap between these benchmarks tells you everything: models are good at writing isolated functions (HumanEval ~90%+), but much worse at navigating real codebases (SWE-bench ~33-49%). This is why agentic tools like Claude Code need the ReAct loop — the model alone isn’t enough; it needs to iterate.

5.3 The Agentic Loop

Claude Code implements the full Perceive → Plan → Act → Observe loop that we’ll formalize in AI 05:

User: "Add authentication to this Express API using JWT"

│

▼ Perceive: reads project structure, package.json, existing routes

│

▼ Plan: "I need to:

│ 1. Install jsonwebtoken and bcrypt

│ 2. Create auth middleware

│ 3. Add login/register routes

│ 4. Protect existing routes"

│

▼ Act: runs npm install → creates files → writes code

│

▼ Observe: runs tests, checks for errors

│

▼ Iterate: fixes TypeScript errors, adjusts middleware

│

▼ Complete: "Authentication added. Here's what I did..."

This is fundamentally different from Copilot/Cursor. You’re not editing code with AI assistance; the AI is editing code under your oversight. The developer’s role shifts from operator to supervisor.
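The loop above can be sketched as a bounded retry loop. Everything here is simulated. `AgentTools` is a hypothetical interface, and the fake tools stand in for an LLM planner and real side effects (file writes, `npm install`, test runs). This is not Claude Code’s implementation, only the control flow the diagram describes.

```typescript
interface Observation {
  passed: boolean;
  log: string;
}

interface AgentTools {
  perceive(): string; // e.g. read package.json, routes, tests
  plan(goal: string, state: string, feedback: string | null): string[];
  act(step: string): void; // e.g. write a file or run a shell command
  observe(): Observation; // e.g. run the test suite or type checker
}

function runAgent(goal: string, tools: AgentTools, maxIterations = 3): { done: boolean; transcript: string[] } {
  const transcript: string[] = [];
  let feedback: string | null = null;
  for (let iteration = 1; iteration <= maxIterations; iteration++) {
    const state = tools.perceive(); // Perceive
    const steps = tools.plan(goal, state, feedback); // Plan, using the last failure if any
    for (const step of steps) {
      tools.act(step); // Act
      transcript.push(`act: ${step}`);
    }
    const result = tools.observe(); // Observe
    transcript.push(`observe: ${result.log}`);
    if (result.passed) return { done: true, transcript };
    feedback = result.log; // Iterate: the error output feeds the next plan
  }
  return { done: false, transcript }; // budget exhausted: hand control back to the human
}

// Simulated environment: the first attempt fails a type check, the fix succeeds.
let attempts = 0;
const fakeTools: AgentTools = {
  perceive: () => "express app, no auth",
  plan: (_goal, _state, feedback) =>
    feedback === null
      ? ["npm install jsonwebtoken bcrypt", "create auth middleware", "add login/register routes"]
      : ["fix type error in auth middleware"],
  act: () => {},
  observe: () => {
    attempts++;
    return attempts === 1
      ? { passed: false, log: "TS2345 in middleware/auth.ts" }
      : { passed: true, log: "all tests passed" };
  },
};

const outcome = runAgent("Add JWT authentication to this Express API", fakeTools);
console.log(outcome.done, outcome.transcript.length); // true 6
```

The `maxIterations` budget is the design choice worth copying. An agent that keeps failing the same check should stop and report back rather than loop indefinitely.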

5.4 CLAUDE.md — The System Prompt for Your Codebase

Just as Cursor has .cursorrules, Claude Code uses CLAUDE.md:

# CLAUDE.md

## Project: E-commerce API

- Runtime: Node.js 20 + TypeScript 5.3

- Framework: Express.js with Zod validation

- Database: PostgreSQL via Prisma ORM

- Testing: vitest + supertest

## Commands

- `npm run dev` — start dev server

- `npm test` — run tests

- `npm run lint` — check linting

## Conventions

- All routes return { data, error, meta } shape

- Use Zod schemas for request validation

- Never commit .env files

Connection to AI 01 §2.2: CLAUDE.md is the AI 01 Persona concept applied at the codebase level. Instead of defining “who the model is” per conversation, you define it once and it persists across every interaction.

5.5 When to Use Claude Code vs. Cursor

| Scenario | Best Tool | Why |
|---|---|---|
| Quick edit, single file | Cursor | Faster feedback, visual diff |
| Multi-file refactoring with context | Cursor Composer | Visual diffs, model choice |
| Large feature implementation | Claude Code | Agentic loop, shell execution |
| Codebase migration | Claude Code | Plans across entire project |
| Debugging with test feedback | Claude Code | Runs tests, reads output, iterates |

🔧 Engineer’s Note: A rough complexity threshold: if the task takes more than 3-4 Chat messages in Cursor, switch to Claude Code. Claude Code thrives on tasks where the “plan → execute → verify → fix” loop needs multiple iterations, which is exactly the workflow SWE-bench measures.

6. Windsurf Deep Dive

What it is: An AI-native IDE from Codeium, the company behind the Codeium extension. Like Cursor, it’s a VS Code fork, but it takes a more opinionated approach to multi-step AI workflows.

6.1 Cascade Flow

Windsurf’s signature feature is Cascade — a multi-step agent flow that runs directly in the IDE:

User describes task

│

▼ Cascade Agent:

├── Step 1: Analyze codebase context

├── Step 2: Plan changes

├── Step 3: Execute file edits

├── Step 4: Run terminal commands (if needed)

└── Step 5: Verify and report

All within the IDE: no terminal switching required

Cascade sits between Cursor’s Composer (multi-file but still chat-driven) and Claude Code’s full agent (terminal-based autonomous execution). It’s agentic, but contained within the familiar IDE experience.

6.2 Free Tier Strategy

Windsurf’s go-to-market differentiator is its generous free tier — a direct challenge to Copilot’s $10-19/month:

| Tier | Price | Key Limits |
|---|---|---|
| Free | $0 | Limited Cascade credits, basic models |
| Pro | $15/mo | Unlimited Cascade, GPT-4o + Claude access |

6.3 Strengths & Weaknesses

| ✅ Strengths | ❌ Weaknesses |
|---|---|
| Good default context management | Less model flexibility than Cursor |
| Cascade flow feels intuitive | Smaller ecosystem and community |
| Competitive pricing | Less mature codebase indexing |
| Clean UI | Fewer @-mention options |

7. Aider Deep Dive

What it is: An open-source, terminal-based AI coding assistant. Git-native, supports 20+ models, and can run with fully local models for zero data leakage.

7.1 Architecture

┌──────────────────────────────────┐

│ Aider CLI │

│ │ │

│ ├─ Git Integration (native) │

│ │ └── Every edit = git commit│

│ │ │

│ ├─ Model Router │

│ │ ├── OpenAI (GPT-4o) │

│ │ ├── Anthropic (Claude) │

│ │ ├── Ollama (local Llama) │

│ │ ├── DeepSeek │

│ │ └── 20+ other providers │

│ │ │

│ ├─ File Watcher │

│ │ └── Tracks changed files │

│ │ │

│ └─ Edit Format │

│ ├── Whole file replace │

│ ├── Search/replace blocks │

│ └── Unified diff │

└──────────────────────────────────┘

7.2 Why Aider Matters

The killer feature: model freedom. Commercial tools limit you to the models and APIs they choose to support; Aider lets you bring any model:

# Use GPT-4o

aider --model gpt-4o

# Use Claude 3.5 Sonnet

aider --model claude-3-5-sonnet-20241022

# Use a LOCAL model via Ollama (zero data leaves your machine)

aider --model ollama/deepseek-coder-v2

This last option, Aider + Ollama with a local model, is one of the few mainstream setups for fully private AI-assisted coding: no external API calls, no code transmitted off your machine, no external dependencies.

7.3 Git-Native Workflow

Every edit Aider makes is automatically wrapped in a git commit with a descriptive message. This means:

- Full undo capability (git revert)

- Clear audit trail of AI-generated changes

- Easy comparison: human commits vs. AI commits
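The “Search/replace blocks” edit format from the architecture diagram is what keeps each of these commits small and reviewable. Below is a simplified applier for a single block in that style. The parsing rules (exact matching, first occurrence, one block per call) are assumptions of this sketch, not a description of Aider’s own parser.

```typescript
// Simplified applier for ONE edit block in the SEARCH/REPLACE style that Aider's
// "diff" edit format uses. Assumptions: non-empty SEARCH and REPLACE sections, exact
// (whitespace-sensitive) matching, first occurrence only.
interface EditBlock {
  path: string;
  search: string;
  replace: string;
}

const BLOCK_RE = /^(.+)\n<<<<<<< SEARCH\n([\s\S]*?)\n=======\n([\s\S]*?)\n>>>>>>> REPLACE$/;

function parseEditBlock(text: string): EditBlock {
  const match = BLOCK_RE.exec(text.trim());
  if (!match) throw new Error("not a SEARCH/REPLACE block");
  return { path: match[1].trim(), search: match[2], replace: match[3] };
}

function applyEditBlock(fileContent: string, block: EditBlock): string {
  const at = fileContent.indexOf(block.search);
  if (at === -1) throw new Error(`SEARCH text not found in ${block.path}`);
  // Splice by index rather than String.replace, so "$&"-style patterns in the
  // replacement text are inserted literally.
  return fileContent.slice(0, at) + block.replace + fileContent.slice(at + block.search.length);
}

const original = "export const add = (a: number, b: number) => a - b;\nexport const one = 1;\n";
const reply = [
  "src/math.ts",
  "<<<<<<< SEARCH",
  "export const add = (a: number, b: number) => a - b;",
  "=======",
  "export const add = (a: number, b: number) => a + b;",
  ">>>>>>> REPLACE",
].join("\n");

const block = parseEditBlock(reply);
const updated = applyEditBlock(original, block);
console.log(block.path); // "src/math.ts"
console.log(updated);
```

Failing loudly when the SEARCH text is missing is the important design choice. A silent no-op would produce a commit that claims a fix it doesn’t contain.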

🔧 Engineer’s Note: Aider + local Ollama = fully private AI coding. Zero data leaves your machine. If you’re working on classified code or pre-patent IP, or in a regulated industry with strict data residency requirements, this is the only option among the five tools compared here. It won’t be as good as GPT-4o or Claude 3.5, but it’s private.

8. Head-to-Head Comparison Matrix

| Feature | Copilot | Cursor | Claude Code | Windsurf | Aider |
|---|---|---|---|---|---|
| Interface | IDE plugin | IDE fork | Terminal CLI | IDE fork | Terminal CLI |
| Multi-file editing | Limited | ✓ Composer | ✓ Agentic | ✓ Cascade | ✓ Git-aware |
| Model choice | GPT-4o fixed | Multi-model | Claude only | Limited | 20+ models |
| Codebase indexing | Limited | ✓ Embeddings | ✓ Full scan | ✓ | Repo map |
| Shell execution | ✗ | Limited | ✓ Full | ✓ Cascade | Via /run |
| Open source | ✗ | ✗ | ✗ | ✗ | ✓ |
| Local models | ✗ | ✗ | ✗ | ✗ | ✓ |
| Project config | .github/copilot-instructions.md | .cursorrules | CLAUDE.md | .windsurfrules | .aider.conf.yml |
| Price/month | $10-19 | $20+ | API cost | Free-$15 | Free (API cost) |
| Enterprise ready | ✓ SOC 2 | Privacy mode | API terms | In progress | Self-managed |

The Decision Framework

What's your task?

│

├── Quick single-line completion

│ └── Copilot or Cursor (Tab)

│

├── Chat-driven single-file edit

│ └── Cursor Chat or Copilot Chat

│

├── Multi-file feature implementation

│ ├── Want visual diffs? → Cursor Composer

│ └── Want IDE agent? → Windsurf Cascade

│

├── Complex multi-step task (with tests)

│ └── Claude Code (terminal agent)

│

├── Need model flexibility / open source

│ └── Aider

│

└── Need 100% data privacy (local models)

└── Aider + Ollama

9. Effective Prompting for Code Generation

Everything from AI 01 applies to code prompting — but with domain-specific refinements.

9.1 The Specificity Hierarchy

When prompting for code, specificity stacks in layers. Each layer narrows the latent space (AI 01 §1.1) further:

Layer 1: Language + version "TypeScript 5.3"

Layer 2: Framework + version "Next.js 14 App Router"

Layer 3: Project conventions "Zustand for state, Zod for validation"

Layer 4: Error handling "Return Result<T, Error> pattern"

Layer 5: Test expectations "Write vitest tests with >80% coverage"

Missing any layer forces the model to guess, and guesses in code are bugs.

Real example — Same task, different specificity levels:

// ❌ Layer 1 only (language):

"Write a function to fetch user data"

// Result: Uses fetch(), returns any, no error handling

// ✅ All 5 layers:

"Write a TypeScript 5.3 function in Next.js 14 App Router that:

- Fetches user data from /api/users/:id using our apiClient (see @file:lib/api.ts)

- Returns Result<User, ApiError> (our error pattern from @file:types/result.ts)

- Handles 404 (user not found) and 500 (server error) separately

- Write a vitest unit test with mock server (msw)"

// Result: Type-safe, follows project patterns, testable

9.2 Context Packaging with @-mentions

In Cursor, strategic @-mention usage is the difference between mediocre and excellent results:

❌ Bad: "Fix the login bug"

✅ Good: "@file:auth/login.ts @file:middleware/session.ts @file:types/user.d.ts

The login function throws a 500 error when the session cookie is expired.

The error occurs at line 47 where we call validateSession().

Fix it to return a 401 with a clear error message instead."

9.3 The Prompt Evolution Pattern

Most developers improve their AI prompts through a predictable progression:

Stage 1: "Write me a login page"

→ Generic HTML, no framework, no styling

Stage 2: "Write a Next.js login page with React Hook Form and Zod validation"

→ Better, but uses default styling, JWT pattern you don't use

Stage 3: Same prompt + @file:components/Form.tsx @file:lib/auth.ts

→ Matches your existing component library and auth pattern

Stage 4: Same prompt + .cursorrules with project conventions

→ Automatically uses your design system, error handling,

naming conventions, testing patterns, EVERY TIME

🔧 Engineer’s Note: Stage 4 is the unlock. Most developers plateau at Stage 2-3, manually adding context each time. The jump to Stage 4 (.cursorrules / CLAUDE.md) is where AI-assisted coding goes from “useful” to “transformative”, because you stop repeating yourself.

9.4 Anti-Patterns to Avoid

| ❌ Anti-Pattern | Why It Fails | ✅ Better Approach |
|---|---|---|
| “Fix this” | No context, no constraints | “Fix the null reference at line 42. The user variable can be undefined when…” |
| “Make it work” | No success criteria | “Make this function return the correct sum. Expected: sum([1,2,3]) === 6” |
| “Refactor this code” | Too vague, endless possibilities | “Extract the database logic from this controller into a separate repository class” |
| “Write tests” | Which tests? What coverage? | “Write vitest unit tests for calculateDiscount() covering: 0%, 50%, 100% discounts and negative prices” |
| Over-specifying | Constrains the model too much | Give the AI room to suggest approaches you haven’t thought of |
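The better prompts in this table work because each one carries a checkable success criterion. As an illustration of what that buys you, here is a hypothetical calculateDiscount() with the cases from the “Write tests” row written as plain assertions. The function's signature and its choice to reject negative prices and out-of-range percentages are assumptions for this sketch; in a vitest project the checks would be expect(...) calls inside it(...) blocks rather than the small check() helper used here to stay dependency-free.

```typescript
// Hypothetical function under test. The name mirrors the anti-pattern table;
// the signature and validation rules are assumptions for this sketch.
type Result<T, E> = { ok: true; value: T } | { ok: false; error: E };

function calculateDiscount(price: number, percent: number): Result<number, string> {
  if (price < 0) return { ok: false, error: "price must be non-negative" };
  if (percent < 0 || percent > 100) {
    return { ok: false, error: "percent must be between 0 and 100" };
  }
  // Round to cents to avoid floating-point noise such as 19.999999.
  const discounted = Math.round(price * (1 - percent / 100) * 100) / 100;
  return { ok: true, value: discounted };
}

// Minimal assertion helper standing in for a test framework.
function check(name: string, condition: boolean): void {
  if (!condition) throw new Error(`Failed: ${name}`);
}

// The success criteria from the "Write tests" row, as executable checks.
const zero = calculateDiscount(80, 0);
check("0% leaves the price unchanged", zero.ok && zero.value === 80);

const half = calculateDiscount(80, 50);
check("50% halves the price", half.ok && half.value === 40);

const full = calculateDiscount(80, 100);
check("100% makes it free", full.ok && full.value === 0);

const negative = calculateDiscount(-5, 10);
check("a negative price is rejected", !negative.ok);
```

A prompt that lists these four cases gives the model (and you) an unambiguous definition of “done”; a prompt that says “write tests” does not.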

9.5 Advanced .cursorrules Patterns

Beyond basic project conventions, .cursorrules can encode sophisticated patterns:

# Advanced .cursorrules patterns

## Error Handling Standard

All functions that can fail MUST return Result<T, AppError>.

Never throw exceptions. Use the Result type from @/types/result.ts.

## Database Access Pattern

Never write raw SQL. All database access goes through Prisma.

Always use transactions for multi-table writes.

Always include a select clause in Prisma queries to avoid over-fetching.

## API Response Standard

All API responses follow: { data: T | null, error: AppError | null, meta: {} }

HTTP 200 for success, 400 for validation, 401 for auth, 500 for server.

Never return stack traces in production error responses.

## Testing Requirements

Every public function needs at least:

- 1 happy path test

- 1 error case test

- 1 edge case test (null, empty, boundary values)

Use vitest + msw for API mocking. No real network calls in tests.

🔧 Engineer’s Note: The single biggest productivity gain in AI-assisted coding isn’t learning a new tool. It’s writing a good .cursorrules or CLAUDE.md file. Front-loading your project’s conventions, patterns, and constraints into a persistent system prompt saves thousands of tokens across every interaction and produces dramatically more consistent output.
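For reference, here is one way the Error Handling and API Response rules above might look as TypeScript types. It is a sketch under assumptions: the AppError fields, the kind names, and the toApiResponse() helper are hypothetical; only the { data, error, meta } shape, the status codes, and the no-stack-traces-in-production rule come from the rules file.

```typescript
// Hypothetical shapes implied by the .cursorrules above.
type AppErrorKind = "validation" | "auth" | "server";

interface AppError {
  kind: AppErrorKind;
  message: string;
  stack?: string; // internal only; must not leak in production responses
}

type Result<T, E = AppError> = { ok: true; value: T } | { ok: false; error: E };

interface ApiResponse<T> {
  data: T | null;
  error: AppError | null;
  meta: Record<string, unknown>;
}

// Status mapping from the "API Response Standard" section.
const STATUS_BY_KIND: Record<AppErrorKind, number> = {
  validation: 400,
  auth: 401,
  server: 500,
};

function toApiResponse<T>(
  result: Result<T>,
  isProduction: boolean,
): { status: number; body: ApiResponse<T> } {
  if (result.ok) {
    return { status: 200, body: { data: result.value, error: null, meta: {} } };
  }
  // Drop the stack trace in production, per "Never return stack traces".
  const error: AppError = isProduction
    ? { kind: result.error.kind, message: result.error.message }
    : result.error;
  return {
    status: STATUS_BY_KIND[result.error.kind],
    body: { data: null, error, meta: {} },
  };
}
```

Having the types in a shared file is what lets a rule like “use the Result type from @/types/result.ts” actually constrain the model: it can read the definition instead of guessing one.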

10. AI-Assisted Code Review & PR Analysis

AI isn’t just about writing code — in enterprise environments, reviewing code is equally important. And AI is rapidly becoming the first line of defense.

10.1 Three Levels of AI Code Review

Level 1: Static Analysis Enhancement

AI augments existing linters → catches logic errors linters can't see

"This null check is missing because user.profile is optional in the type"

Level 2: PR Review Agent

Agent reads PR diff → analyzes for bugs, performance, security

→ Generates review comments (like a senior engineer's code review)

"This SQL query in line 87 is vulnerable to injection. Use parameterized queries."

Level 3: Continuous Codebase Health

Agent periodically scans entire codebase → finds tech debt, outdated patterns

"17 files still use the deprecated v2 API client. Migration path available."

10.2 Tools for AI Code Review

| Tool | Approach | Best For |
|---|---|---|
| Copilot PR Review | GitHub-native, auto-generates PR summary | Quick summary of changes |
| CodiumAI (Qodo) | Generates test cases + behavior analysis | Test coverage gaps |
| Cursor @diff | Use @diff to analyze PR differences | Manual review with AI assist |
| Custom Agent | Build with AI 05 Agent + AI 04 MCP → GitHub API | Full control, custom rules |

Connection to AI 05: A Code Review Agent is the most practical “single-purpose agent” for beginners. It’s a natural first project when you reach the Agent Architecture article — reading a PR diff, analyzing it, and posting comments.
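The “reading a PR diff” step is more mechanical than it sounds: review comments must be anchored to a file and a line number in the new version of that file. A minimal sketch, assuming a unified diff as produced by git diff. addedLines() is a simplified parser (it ignores renames and other edge cases), and flagSqlConcatenation() is a hypothetical regex heuristic standing in for the analysis an LLM would actually perform.

```typescript
interface AddedLine {
  file: string;
  line: number; // 1-based line number in the new version of the file
  text: string;
}

// Collect added lines from a git-style unified diff, tracking new-file line numbers.
function addedLines(diff: string): AddedLine[] {
  const result: AddedLine[] = [];
  let file: string | null = null;
  let newLine = 0;
  let inHunk = false;

  for (const raw of diff.split("\n")) {
    if (raw.startsWith("diff --git ")) {
      inHunk = false; // a new file section begins
      file = null;
      continue;
    }
    if (!inHunk && raw.startsWith("+++ ")) {
      const target = raw.slice(4);
      file = target === "/dev/null" ? null : target.replace(/^b\//, "");
      continue;
    }
    // Hunk header: "@@ -oldStart,oldCount +newStart,newCount @@"
    const hunk = /^@@ -\d+(?:,\d+)? \+(\d+)(?:,\d+)? @@/.exec(raw);
    if (hunk) {
      newLine = Number(hunk[1]);
      inHunk = true;
      continue;
    }
    if (!inHunk || file === null) continue; // index/--- headers, or a deleted file

    if (raw.startsWith("+")) {
      result.push({ file, line: newLine, text: raw.slice(1) });
      newLine++;
    } else if (raw.startsWith(" ")) {
      newLine++; // context lines exist in both versions
    }
    // "-" lines exist only in the old file; "\ No newline" markers are ignored.
  }
  return result;
}

// Hypothetical heuristic: flag added lines that seem to build SQL via
// string concatenation or template interpolation.
function flagSqlConcatenation(lines: AddedLine[]): string[] {
  const sqlKeyword = /\b(SELECT|INSERT|UPDATE|DELETE)\b/i;
  const interpolation = /\$\{|["'`]\s*\+|\+\s*["'`]/;
  return lines
    .filter((l) => sqlKeyword.test(l.text) && interpolation.test(l.text))
    .map((l) => `${l.file}:${l.line}: possible SQL injection; use parameterized queries.`);
}
```

Swapping the regex for an LLM call changes the quality of the findings, but the anchoring logic stays the same: each finding still needs a file and a new-file line before it can be posted as a review comment.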

🔧 Engineer’s Note: AI Code Review can’t replace human review, but it’s an excellent first filter. Let AI catch the low-level errors — null checks, SQL injection, deprecated APIs, missing error handling — while human reviewers focus on architecture decisions and business logic. This is the same “AI pre-filter + human judgment” pattern we’ll see in AI 08’s monthly close process.

11. Cost, Privacy & Enterprise Security

This is where the rubber meets the road for enterprise adoption. The question isn’t whether these tools are useful — it’s whether they’re safe to use at work.

11.1 ⚠️ The Enterprise Code Leak Risk

Here’s the scenario that keeps CISOs up at night:

Developer's daily behavior:

Uses personal Cursor account on company laptop

→ Every code snippet sent to external API for processing

→ Company's proprietary algorithms exposed to third-party servers

→ Code may be used for model training (depending on terms)

→ Intellectual property leakage → legal liability

This is not hypothetical. Samsung banned ChatGPT after engineers accidentally leaked source code. Apple, Amazon, and JPMorgan have all restricted employee use of generative AI tools such as ChatGPT.

11.2 Enterprise Compliance Matrix

| Tool | SOC 2 | No Training on Code | Privacy Mode | Data Residency |
|---|---|---|---|---|
| Copilot Business | ✓ Type II | ✓ Guaranteed | N/A (default) | US/EU options |
| Cursor Business | In progress | ✓ Privacy Mode | ✓ Explicit opt-in | Via API provider |
| Claude Code | Via Anthropic API | ✓ API terms | N/A | Via API provider |
| Windsurf | In progress | Varies by tier | — | Via API provider |
| Aider + Local | N/A (self-managed) | ✓ (no API calls) | ✓ Complete | Your infrastructure |

11.3 Compliance Frameworks That Apply to AI Coding Tools

If your organization operates under regulatory or industry compliance, several frameworks bear on how you adopt AI coding tools, even where they don’t name those tools explicitly:

Regulatory Landscape for AI-Assisted Development:

┌─────────────────────────────────────────────────┐

│ NIST AI RMF (AI Risk Management Framework) │

│ ─ Govern: establish AI use policies for dev teams │

│ ─ Map: identify where AI touches code pipeline │

│ ─ Measure: track AI-generated code percentage │

│ ─ Manage: review/audit procedures for AI code │

├─────────────────────────────────────────────────┤

│ EU AI Act (2024) │

│ ─ AI coding tools = "general purpose AI" │

│ ─ Transparency: disclose AI-generated code │

│ ─ High-risk systems: extra scrutiny on AI code │

│ in safety-critical applications (medical, auto)│

├─────────────────────────────────────────────────┤

│ ISO 27001 + SOC 2 │

│ ─ Data processing: where does code data go? │

│ ─ Vendor management: AI tool = third-party vendor│

│ ─ Access control: who can use AI tools? │

└─────────────────────────────────────────────────┘

🔧 Engineer’s Note: ISO 27001 auditors are now asking: “Does your development team use AI coding tools?” If the answer is “yes” but there’s no formal usage policy, expect it to be raised as an audit finding. Minimum requirements: (1) an AI Tool Usage Policy document, (2) an approved tools list, (3) a code review process confirming all AI-generated code has been human-reviewed.
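For the NIST “Measure” row, one low-tech starting point is counting commits that carry an AI attribution marker. This sketch assumes your team marks AI-assisted commits with a Co-Authored-By trailer naming the tool (Claude Code can add such a trailer; for other tools, treat it as a team convention you would need to adopt). The tool names in the pattern are examples, the input is a list of commit messages (for instance, git log --format=%B%x00 split on NUL bytes), and commit counts are only a rough proxy for the share of AI-generated lines.

```typescript
// Hypothetical attribution pattern; adjust it to whatever your team records.
// No "g" flag on purpose: RegExp.test() with "g" is stateful via lastIndex.
const AI_TRAILER = /^Co-Authored-By:.*\b(Claude|Copilot|Cursor|Aider)\b/im;

interface AiShare {
  total: number;      // non-empty commit messages considered
  aiAssisted: number; // messages matching the attribution pattern
  percent: number;    // rounded to one decimal place
}

function aiAssistedShare(messages: string[], pattern: RegExp = AI_TRAILER): AiShare {
  const commits = messages.map((m) => m.trim()).filter((m) => m.length > 0);
  const aiAssisted = commits.filter((m) => pattern.test(m)).length;
  const percent =
    commits.length === 0 ? 0 : Math.round((aiAssisted / commits.length) * 1000) / 10;
  return { total: commits.length, aiAssisted, percent };
}
```

Tracked over time, this number also gives the review-process requirement in the note above something concrete to audit against.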

11.4 The Compliance Decision Tree

Is your code confidential or regulated?

│

├── Yes ─→ Is there a company AI policy?

│ │

│ ├── Yes ─→ Follow it. Use approved tools only.

│ │ Options: Copilot Business (SOC 2)

│ │ Cursor Privacy Mode

│ │ Aider + local model

│ │

│ └── No ──→ 🚨 FLAG THIS IMMEDIATELY.

│ You need a policy BEFORE using AI tools.

│ Using AI coding tools without a policy

│ = personal legal liability risk.

│

└── No ──→ Any tool works. Personal preference.

11.5 IP and Licensing Risks

Beyond data privacy, there’s the question of generated code provenance:

- Can the AI generate code that’s copyrighted? (Ongoing litigation — Doe v. GitHub class action)

- Does the output come with license obligations? (Copyleft contamination risk)

- Who owns AI-generated code? (Varies by jurisdiction. US Copyright Office guidance: material generated purely by AI, without human authorship, is not copyrightable.)

🔧 Engineer’s Note: Before using ANY AI coding tool at work, verify your company’s AI Use Policy. No policy? Don’t use the tools — or push for one to be created. Using AI coding tools without a policy isn’t just a technical risk — it’s a legal risk. Cursor’s Privacy Mode and Copilot Business’s no-retention guarantee are the minimum compliance bar for enterprise use.
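The decision tree in §11.4 and the rule in this note are simple enough to encode as a pre-flight check, for example in an internal onboarding script. Everything here is hypothetical: the PolicyContext shape, the tool identifiers, and the approved list are placeholders for whatever your organization’s AI Use Policy actually specifies.

```typescript
// Hypothetical inputs; real values come from your organization's AI Use Policy.
interface PolicyContext {
  codeIsConfidential: boolean;        // confidential or regulated code?
  policyExists: boolean;              // does a company AI Use Policy exist?
  approvedTools: ReadonlySet<string>; // tools the policy approves
}

interface Decision {
  allowed: boolean;
  reason: string;
}

// Mirrors the §11.4 decision tree branch by branch.
function canUseTool(tool: string, ctx: PolicyContext): Decision {
  if (!ctx.codeIsConfidential) {
    return { allowed: true, reason: "Non-confidential code: any tool works." };
  }
  if (!ctx.policyExists) {
    return { allowed: false, reason: "No AI Use Policy: flag this and get a policy first." };
  }
  return ctx.approvedTools.has(tool)
    ? { allowed: true, reason: `${tool} is on the approved list.` }
    : { allowed: false, reason: `${tool} is not on the approved list.` };
}
```

Note the ordering: the “no policy” branch blocks even tools that would otherwise be acceptable, which matches the tree’s “policy BEFORE tools” rule.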

12. Integration into CI/CD

AI doesn’t stop at your local editor. It’s increasingly embedded in the entire development pipeline.

12.1 AI in Code Review Pipelines

Developer pushes PR

│

▼

CI Pipeline triggers:

├── Standard: lint → test → build

└── AI-Enhanced:

├── Copilot PR Summary (auto-generated)

├── AI security scan (beyond SAST patterns)

├── AI test suggestion (uncovered edge cases)

└── AI documentation check (outdated docs?)

12.2 AI in Test Generation

Tools like CodiumAI can automatically generate test suites for new code:

- Analyze function signature and implementation

- Identify edge cases (null inputs, boundary values, error paths)

- Generate test cases with assertions

- Integrate into CI as a “minimum coverage” check
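A “minimum coverage” gate is usually a small script that runs after the test step. This sketch assumes Istanbul’s json-summary format (coverage/coverage-summary.json, which Vitest can emit via its json-summary coverage reporter), where total.lines.pct and total.branches.pct hold percentages. The coverageGate() name and the 80%/70% thresholds are illustrative. It takes already-parsed JSON so it stays easy to test; in CI you would read the file, call the function, and exit non-zero when it fails.

```typescript
// Subset of Istanbul's json-summary format that this gate reads.
interface CoverageMetric {
  total: number;
  covered: number;
  pct: number;
}

interface CoverageSummary {
  total: {
    lines: CoverageMetric;
    branches: CoverageMetric;
  };
}

interface GateResult {
  passed: boolean;
  failures: string[];
}

// Illustrative thresholds; tune them to your codebase.
function coverageGate(summary: CoverageSummary, minLines = 80, minBranches = 70): GateResult {
  const failures: string[] = [];
  const { lines, branches } = summary.total;
  if (lines.pct < minLines) {
    failures.push(`Line coverage ${lines.pct}% is below ${minLines}%`);
  }
  if (branches.pct < minBranches) {
    failures.push(`Branch coverage ${branches.pct}% is below ${minBranches}%`);
  }
  return { passed: failures.length === 0, failures };
}
```

The gate is deliberately tool-agnostic: whether the tests came from a human or a generator like CodiumAI, the pipeline only checks the resulting numbers.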

12.3 AI in Documentation

The most underrated use case: keeping documentation in sync with code.

Code changes → AI detects affected docs → generates update PR

"README.md section 'API Endpoints' is outdated.

The /users endpoint now returns a pagination object.

Suggested update: [diff]"

12.4 The Human-in-the-Loop Principle

Despite all this automation, one principle remains non-negotiable: a human must approve every AI-generated change that reaches production.

AI generates → Human reviews → Ship to production

↑ ↑

NEVER skip NEVER skip

This is the same pattern across the entire AI series, from this article’s CI/CD pipeline to AI 05’s agent guardrails to AI 08’s monthly close process. AI proposes, humans approve.
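On GitHub, the standard way to enforce the human review step is a branch protection rule (or ruleset) that requires approving reviews before merging. If your pipeline also runs an AI reviewer, the rule worth making explicit is that its approval never counts as the human one. A minimal sketch of that check; the PullRequest and Review shapes are hypothetical, loosely modeled on GitHub review data (where a review has a state such as APPROVED and a reviewer account can be of type Bot).

```typescript
// Hypothetical shapes, loosely modeled on GitHub review data.
interface Review {
  reviewer: string;
  reviewerType: "User" | "Bot";
  state: "APPROVED" | "CHANGES_REQUESTED" | "COMMENTED";
}

interface PullRequest {
  author: string;
  reviews: Review[];
}

function mergeDecision(pr: PullRequest): { mergeable: boolean; reason: string } {
  // Approvals from AI review bots (the §10 pre-filter) and self-approvals never count.
  const humanApproved = pr.reviews.some(
    (r) => r.state === "APPROVED" && r.reviewerType === "User" && r.reviewer !== pr.author,
  );
  if (!humanApproved) {
    return { mergeable: false, reason: "No approval from a human reviewer other than the author." };
  }
  return { mergeable: true, reason: "Human-approved." };
}
```

In this model the AI reviewer can comment, suggest, and even approve, but the merge still waits for a person, which is exactly the “AI proposes, humans approve” split.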

13. Key Takeaways

Let’s compress this article into the insights that matter:

- The developer’s role is shifting from “writer” to “reviewer.” AI generates code; you evaluate whether it’s correct, efficient, and secure. Senior engineers benefit most, because reviewing code requires judgment that comes from experience. (§1)

- Code LLMs use Fill-in-the-Middle training, not just left-to-right prediction. This is why they can complete code inside a function body, not just at the end. (§2.1)

- Context assembly is the invisible differentiator. The quality of AI suggestions depends on which files the tool includes in the context window. Cursor’s @-mentions give you explicit control. (§2.2)

- Five tools, four levels of capability. Tab completion (Copilot), Chat+Edit (Cursor), Multi-file Compose (Cursor Composer/Windsurf Cascade), Agentic Coding (Claude Code). Match the tool to the task complexity. (§3-8)

- .cursorrules and CLAUDE.md are your highest-ROI investment. 30 minutes of upfront configuration saves thousands of tokens and produces dramatically more consistent code. It’s AI 01’s Persona concept applied to your project. (§4.3, §5.3)

- AI Code Review is the practical first step to AI agents. Let AI catch low-level bugs while humans focus on architecture and business logic. “AI pre-filter + human judgment” is the pattern of the AI era. (§10)

- Enterprise security is non-negotiable. Verify your company’s AI Use Policy before using ANY tool at work. Copilot Business (SOC 2) or Aider + local model for regulated environments. No policy = don’t use the tools. (§11)

- Aider + local models is the only fully private option among the five tools. If your code can’t leave your machine, this is it: expect lower output quality than frontier cloud models in exchange for complete privacy. (§7)

- Human-in-the-loop is non-negotiable. AI proposes, humans approve: in CI/CD, in code review, in every pipeline. Never auto-deploy AI-generated code without human review. (§12.4)

Series Navigation:

← Previous: AI 01: Prompt Engineering — Programming the Probabilistic Engine

→ Next: AI 03: RAG & Vector Databases — Teaching LLMs to read your private data.

You now have the tools to write code with AI at every level — from single-line completions to multi-file autonomous refactoring. You know which tool to use when, and how to keep your code safe.

But there’s a fundamental constraint we haven’t addressed: every AI coding tool relies on the LLM’s pre-trained knowledge. When your project uses a custom internal framework, proprietary APIs, or domain-specific conventions that don’t exist in the model’s training data — even the best prompting won’t help.

To solve that, we need to give the model access to your knowledge. That’s what Retrieval-Augmented Generation (RAG) does. And that’s the story of AI 03.